Techletter #88 | August 31, 2024

Before designing a rate limiter, we need to understand what a rate limiter is and why it is required.

So what is a rate limiter?

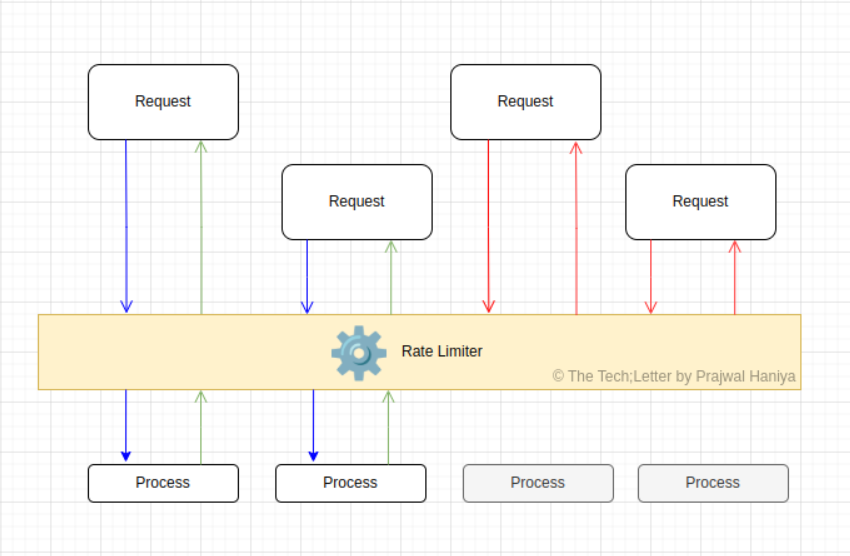

A rate limiter is a component or tool designed to control the rate at which requests are processed or executed in a system.

Rate limiters function by setting the maximum number of requests that a user or client can make within a specified time frame. If this limit is exceeded, subsequent requests may be denied or delayed until the limit resets.
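As a rough illustration of the idea above, here is a minimal fixed-window counter: each client gets a fixed number of requests per time window, and anything beyond that is rejected until the window resets. The class name `FixedWindowLimiter`, its parameters, and the injectable `now` argument are assumptions made for this sketch, not part of any particular library.

```javascript
// Minimal fixed-window counter (illustrative sketch, not production code).
// `limit` requests are allowed per `windowMs` milliseconds for each client.
class FixedWindowLimiter {
  constructor(limit, windowMs) {
    this.limit = limit;
    this.windowMs = windowMs;
    this.windows = new Map(); // clientId -> { start, count }
  }

  // Returns true if the request is allowed, false if the limit is exceeded.
  // `now` is injectable so the behavior can be checked without waiting.
  allow(clientId, now = Date.now()) {
    const entry = this.windows.get(clientId);
    if (!entry || now - entry.start >= this.windowMs) {
      // First request, or the previous window has expired: start a new window.
      this.windows.set(clientId, { start: now, count: 1 });
      return true;
    }
    if (entry.count < this.limit) {
      entry.count += 1;
      return true;
    }
    return false; // over the limit until the window resets
  }
}

// Three requests per second: the fourth call in the same window is denied,
// and the call at t = 1000 ms starts a new window.
const limiter = new FixedWindowLimiter(3, 1000);
const results = [0, 100, 200, 300, 1000].map((t) => limiter.allow('user-1', t));
console.log(results);
```

A fixed window is the simplest option to reason about, but it allows bursts around window boundaries, which is one reason the algorithms discussed later are often preferred.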

Client-side and server-side rate limiting are two approaches to controlling the number of requests made to an API or server, each with distinct characteristics and applications.

Client-side rate limiting is implemented within the client application. This means that the client is responsible for tracking and enforcing the rate limits on its own requests to the server. Its advantages include immediate feedback and reduced server load. Its drawbacks are security risks and inconsistent enforcement, because the server has to trust every client to apply the limit correctly.
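To make the client-side approach concrete, here is a small sketch of a throttle that spaces out a client's own outgoing requests. The `ClientThrottle` class and its `reserve` method are hypothetical names invented for this example. Nothing forces a client to use such a throttle, which is the enforcement weakness described above.

```javascript
// Client-side throttle sketch: spaces out outgoing requests so the client
// stays under a self-imposed limit of one request per `minIntervalMs`.
class ClientThrottle {
  constructor(minIntervalMs) {
    this.minIntervalMs = minIntervalMs;
    this.nextSlot = 0; // earliest time (ms) at which the next request may go out
  }

  // Reserves the next free slot and returns how long the caller should wait
  // (in ms) before sending. `now` is injectable for testing.
  reserve(now = Date.now()) {
    const sendAt = Math.max(now, this.nextSlot);
    this.nextSlot = sendAt + this.minIntervalMs;
    return sendAt - now;
  }
}

// Three requests issued at the same instant are spread 200 ms apart;
// a request issued much later goes out immediately.
const throttle = new ClientThrottle(200);
const delays = [0, 0, 0, 1000].map((t) => throttle.reserve(t));
console.log(delays);
```

In a real client, the returned delay would typically be passed to something like `setTimeout` before the request is sent.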

Server-side rate limiting is implemented on the server, where it monitors and controls the number of requests from clients. This method is generally more robust and secure.

How to create a rate limiter? A summary

- Create a rate-limiting middleware.

- HTTP status code 429 (Too Many Requests) indicates that the user has sent too many requests.

- The rate limiter is usually implemented within a component called an API gateway.

- Use a rate-limiting algorithm.

- It is usually necessary to have different buckets for different API endpoints (token bucket).

- Use a queue to hold incoming requests (leaky bucket).
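The first few points above (a middleware, status code 429, and separate limits per endpoint) can be sketched roughly as follows. This is an Express-style middleware written without any framework dependency. The shape of `req` and `res`, the `createRateLimiter` name, the rule format, and the simple per-window counter are all assumptions for illustration. The response header names are the ones listed later in this article.

```javascript
// Express-style rate-limiting middleware (illustrative sketch).
// Assumptions: `req.ip` and `req.path` identify the caller and endpoint, and
// `res` offers `setHeader`, `statusCode`, and `end` like Node's http response.
function createRateLimiter(rules, defaultRule, clock = Date.now) {
  const counters = new Map(); // "ip:path" -> { start, count }

  return function rateLimit(req, res, next) {
    const rule = rules[req.path] || defaultRule; // separate limits per endpoint
    const key = `${req.ip}:${req.path}`;
    const now = clock();

    let entry = counters.get(key);
    if (!entry || now - entry.start >= rule.windowMs) {
      entry = { start: now, count: 0 }; // start a fresh window
      counters.set(key, entry);
    }

    res.setHeader('X-Ratelimit-Limit', String(rule.limit));

    if (entry.count >= rule.limit) {
      const retryAfterSec = Math.ceil((entry.start + rule.windowMs - now) / 1000);
      res.setHeader('X-Ratelimit-Remaining', '0');
      res.setHeader('X-Ratelimit-Retry-After', String(retryAfterSec));
      res.statusCode = 429; // Too Many Requests
      res.end('Too Many Requests');
      return;
    }

    entry.count += 1;
    res.setHeader('X-Ratelimit-Remaining', String(rule.limit - entry.count));
    next();
  };
}

// Tiny fake request/response pair to exercise the middleware without a server.
function fakeCall(middleware, path) {
  const res = {
    headers: {},
    statusCode: 200,
    setHeader(name, value) { this.headers[name] = value; },
    end() {},
  };
  middleware({ ip: '10.0.0.1', path }, res, () => {});
  return res;
}

let fakeNow = 0; // fake clock so the example does not depend on real time
const limiterMiddleware = createRateLimiter(
  { '/login': { limit: 2, windowMs: 60000 } }, // stricter rule for one endpoint
  { limit: 100, windowMs: 60000 },             // default rule for everything else
  () => fakeNow
);
const statuses = [1, 2, 3].map(() => fakeCall(limiterMiddleware, '/login').statusCode);
console.log(statuses); // the third call exceeds the /login limit and gets 429
```

Keeping the counters in a local `Map` only works for a single process; the distributed case is covered further down.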

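```javascript
// Token Bucket Algorithm
class TokenBucket {
  // capacity: maximum number of tokens the bucket can hold.
  // refillIntervalSec: assumed meaning of the second argument, i.e. how often
  // (in seconds) the bucket is refilled. Defaults to the original 10 seconds.
  constructor(capacity, refillIntervalSec = 10) {
    this.capacity = capacity;
    this.tokens = capacity;
    this.lastRefill = Date.now();

    // The interval at which the bucket is refilled can be made dynamic.
    setInterval(() => {
      this.refill();
    }, refillIntervalSec * 1000);
  }

  // Try to take `tokens` tokens; returns true on success, false otherwise.
  consume(tokens = 1) {
    if (this.tokens >= tokens) {
      this.tokens -= tokens;
      console.log('Consumed', tokens);
      console.log('Remaining tokens:', this.tokens);
      return true;
    } else {
      console.log('No tokens available to consume. Please try again later');
      return false;
    }
  }

  // Simplified refill: tops the bucket back up to full capacity on each tick.
  refill() {
    const now = Date.now();
    const timePassed = now - this.lastRefill;
    console.log('Refilling tokens after', timePassed, 'ms');
    const tokensToAdd = this.capacity - this.tokens;
    console.log('Tokens to add:', tokensToAdd);
    this.tokens = Math.min(this.capacity, this.tokens + tokensToAdd);
    this.lastRefill = now;
    console.log('Total available tokens after refill:', this.tokens);
  }
}

// A bucket of 20 tokens, refilled every 10 seconds.
const bucket = new TokenBucket(20, 10);

// Simulate a client that consumes 5 tokens every 3 seconds.
setInterval(() => {
  bucket.consume(5);
}, 3000);
```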
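For comparison with the token bucket above, the leaky bucket mentioned in the summary can be sketched with a bounded queue: requests are queued as they arrive and processed at a fixed rate, and new requests are rejected while the queue is full. The `LeakyBucket` class, its parameters, and the manually triggered `leak` method are assumptions for this example; a real service would drive `leak` from a timer.

```javascript
// Leaky bucket sketch: requests wait in a bounded FIFO queue and are
// processed ("leak out") at a fixed rate; when the queue is full, new
// requests are rejected.
class LeakyBucket {
  constructor(capacity, leakPerTick) {
    this.capacity = capacity;       // maximum number of queued requests
    this.leakPerTick = leakPerTick; // requests processed on each tick
    this.queue = [];
  }

  // Tries to enqueue a request; returns false if the bucket is full.
  add(request) {
    if (this.queue.length >= this.capacity) {
      return false;
    }
    this.queue.push(request);
    return true;
  }

  // Processes up to `leakPerTick` queued requests in arrival order and
  // returns how many were handled.
  leak(handler) {
    const batch = this.queue.splice(0, this.leakPerTick);
    batch.forEach((request) => handler(request));
    return batch.length;
  }
}

// Capacity 3, two requests processed per tick: 'd' is rejected because the
// queue is full, and the first tick handles 'a' and 'b'.
const leaky = new LeakyBucket(3, 2);
const accepted = ['a', 'b', 'c', 'd'].map((r) => leaky.add(r));
const processed = [];
leaky.leak((r) => processed.push(r));
console.log(accepted, processed);
```

The token bucket tolerates short bursts up to its capacity, while the leaky bucket smooths traffic into a steady outflow.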

- A counter is needed to keep track of how many requests are sent from the same user or IP address.

- Rate limiting rules

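- Rate limiter headers: X-Ratelimit-Remaining (requests still allowed in the current window), X-Ratelimit-Limit (requests allowed per window), X-Ratelimit-Retry-After (seconds to wait before retrying).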

- Rate-limiting rules are served from the cache: workers frequently pull the rules from disk and store them in the cache.

- There are two challenges for a rate limiter in a distributed system:

  - Race condition:

    - Locks are the most obvious way to solve race conditions, but locks will slow the system down.

    - Other solutions:

      - Lua script

      - Sorted sets data structure in Redis

  - Synchronization issue:

    - Use sticky sessions (not advised, as it is not scalable)

    - Use centralized data stores like Redis

- Performance optimization of a rate limiter

- Rate limiters work well for day-to-day operations, but during incidents (for example, if a service is operating more slowly than usual), it is sometimes necessary to drop low-priority requests to make sure that more critical requests get through. This is called load shedding. Stripe, for example, describes it as something that happens infrequently but is an important part of keeping Stripe available.
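A minimal sketch of the load-shedding idea, assuming requests carry a priority label and the service tracks how many requests are in flight. The `LoadShedder` class, the `'critical'` and `'low'` labels, and the reserved-capacity scheme are assumptions for illustration only; this is not a description of how Stripe implements it.

```javascript
// Load-shedding sketch: when the number of in-flight requests crosses a
// threshold, low-priority requests are dropped so critical ones still fit.
// The priority labels and thresholds are assumptions for this example.
class LoadShedder {
  constructor(maxInFlight, reservedForCritical) {
    this.maxInFlight = maxInFlight;
    // Capacity above this mark is kept free for critical requests only.
    this.lowPriorityCeiling = maxInFlight - reservedForCritical;
    this.inFlight = 0;
  }

  // Returns true if the request is admitted; callers must call `release`
  // once an admitted request finishes.
  tryAcquire(priority) {
    const ceiling = priority === 'critical' ? this.maxInFlight : this.lowPriorityCeiling;
    if (this.inFlight >= ceiling) {
      return false; // shed this request
    }
    this.inFlight += 1;
    return true;
  }

  release() {
    this.inFlight = Math.max(0, this.inFlight - 1);
  }
}

const shedder = new LoadShedder(5, 2); // 5 slots, 2 reserved for critical traffic
const outcomes = [
  shedder.tryAcquire('low'),      // admitted (1 in flight)
  shedder.tryAcquire('low'),      // admitted (2 in flight)
  shedder.tryAcquire('low'),      // admitted (3 in flight)
  shedder.tryAcquire('low'),      // shed: low-priority ceiling of 3 reached
  shedder.tryAcquire('critical'), // admitted (4 in flight)
  shedder.tryAcquire('critical'), // admitted (5 in flight)
  shedder.tryAcquire('critical'), // shed: no capacity left at all
];
console.log(outcomes);
```

Reserving part of the capacity for critical traffic means low-priority requests start failing first as load rises, which matches the goal of letting the more important requests through.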